Reading between the lines

A summary of the third chapter of You Don't Know What You're M ss ng

Heard melodies are sweet, but those unheard are sweeter - John Keats, ‘Ode on a Grecian Urn’.

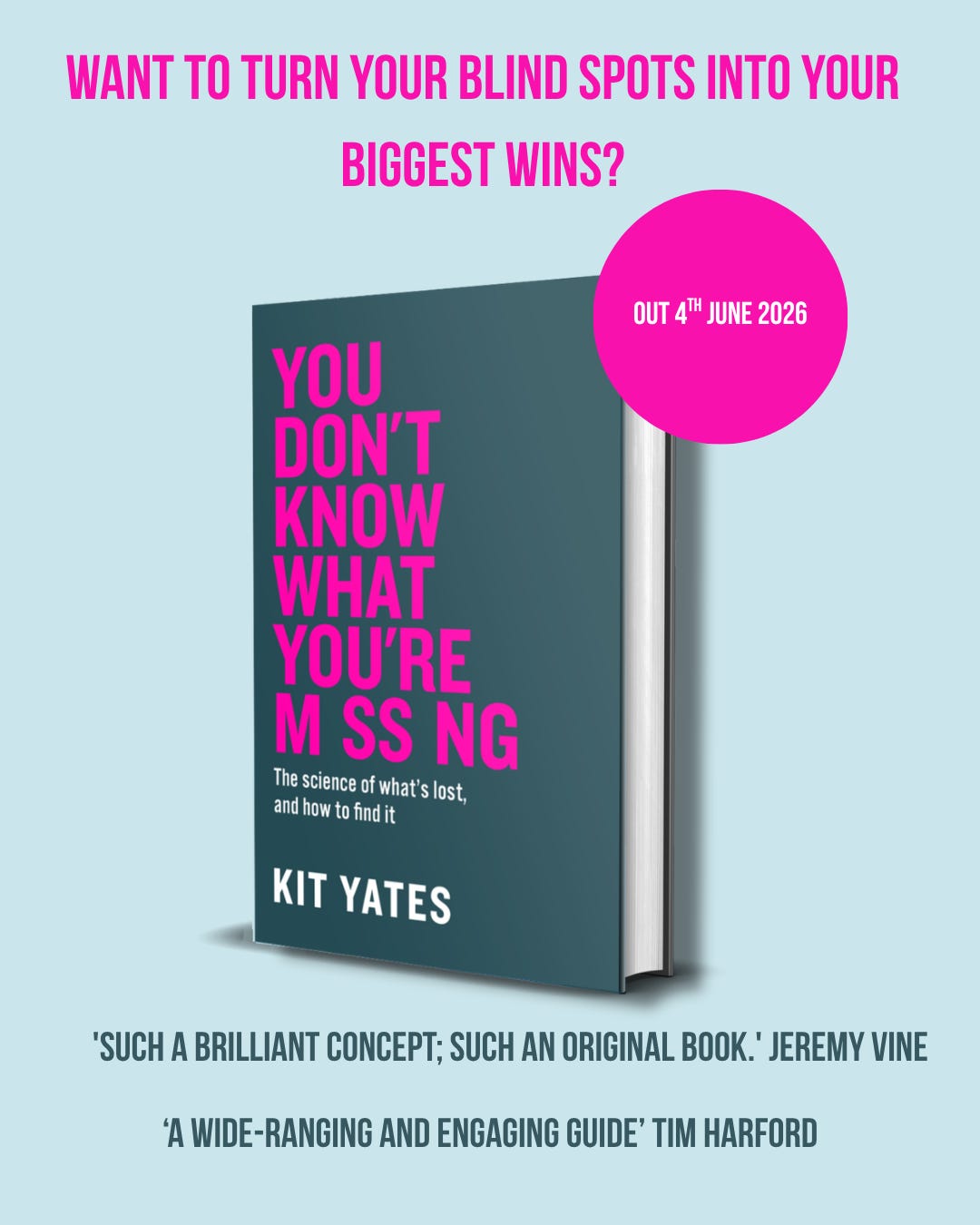

First off, I wanted to say a massive thanks to all of you who’ve already gone and pre-ordered a copy of the book. Your support means so much to me. As you’ll probably know by now, I’m writing a series of posts summarising the chapters of my new book, You Don’t Know What You’re M ss ng, ahead of publication on 4th June. The aim is to give you a feel for the shape of the argument, the kinds of stories I use in the book to get the message across, and the ideas I hope will stick with you long after you’ve finished reading it.

In this post I’m going to summarise Chapter 3, Reading between the lines.

It mhigt spusiere you to konw taht dispete the fcat I hvae jbumled up all the ltteres in the mddile of the wdros in tihs scetnene you can sltil raed it woutiht mcuh erffot: as lnog as the fsirt and lsat ltteres are in the rghit pacle you can wrok it out. 51M1L4RLY, 3V3N 1F 50M3 L3773R5 4R3 5UB5717U73D F0R NUMB3R5 Y0U C4N R34D 7H15 53N73NC3 W17H0U7 3V3N BR34K1NG 57R1D3. P#rh#ps #v#n m#r# s#rpr#s#ng #s th#t #f #ll th# v#w#ls #n th# s#nt#nc# g# m#ss#ng #nd #r# r#pl#c#d w#th #n#ther ch#r#ct#r y## c#n st#ll j#st ab##t r##d #t. It was harder, but you could still manage, right?

We are incredibly adept at decoding garbled messages, in which seemingly important linguistic structures are missing. One version of this is disemvoweling: a technique internet moderators sometimes use to tread the fine line between overzealous censorship and an unregulated free-for-all. It renders potentially offensive posts less objectionable, while ensuring readers c#n st#ll r##d th#m #f th#y r##lly try. What I’m really concerned about in this chapter, though, is what this says about how our brains operate when confronted with missing information.
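If you want to try garbling text yourself, here is a minimal Python sketch of two of the tricks above: jumbling the middle letters of each word, and disemvoweling. The function names (`jumble_word`, `jumble_text`, `disemvowel`) and the choice of `#` as the placeholder are made up for illustration, and "y" is assumed not to count as a vowel.

```python
import random
import re


def jumble_word(word: str, rng: random.Random) -> str:
    """Shuffle the interior letters, keeping the first and last in place."""
    if len(word) <= 3:
        return word  # nothing to shuffle in very short words
    middle = list(word[1:-1])
    rng.shuffle(middle)
    return word[0] + "".join(middle) + word[-1]


def jumble_text(text: str, seed: int = 0) -> str:
    """Jumble every run of letters; punctuation and spacing are untouched."""
    rng = random.Random(seed)  # seeded so the result is repeatable
    return re.sub(r"[A-Za-z]+", lambda m: jumble_word(m.group(), rng), text)


def disemvowel(text: str, placeholder: str = "#") -> str:
    """Replace every vowel (a, e, i, o, u) with a placeholder character."""
    return re.sub(r"[aeiouAEIOU]", placeholder, text)


print(jumble_text("you can still read this without much effort"))
print(disemvowel("can still read them if they really try"))
# second line prints: c#n st#ll r##d th#m #f th#y r##lly try
```

The jumbled output depends on the random seed, so it will vary, but the first and last letter of every word always stay where they were.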

A big part of the answer is context. The words in a message prime us to expect particular words to come next. Even if a word is ambiguous because we haven’t looked at it carefully or it has become corrupted, context combined with our prior experiences can allow us to interpret what it should be. At a finer scale, the letters of a word prime us to expect other letters. If you see a “q” you’re already leaning towards “u” coming next before you even get to it.

But it isn’t only the context that comes before the missing piece that’s important. Often it’s the context that comes after that helps us out. With heavily jumbled text, you might not truly parse each word as you encounter it. You can feel, in hindsight, as if you understood everything smoothly, when in reality later words have allowed your brain to go back and fill in gaps you didn’t even notice were there. Reading isn’t just a predictive process; it’s also a repair job.

I’ve had first-hand insight into this while helping my son learn to read. A couple of years ago, he reached the stage where he no longer needed to sound out every letter in order to parse a word. He lets context help him out. If he gets stuck, he knows that reading on often resolves the ambiguity. That is a tremendous advantage. It speeds up reading dramatically. But it comes with a familiar trade-off: the more your brain relies on expectation, the more it risks seeing what it expects rather than what is actually on the page. Which is why proof-reading our own work is so difficult.

When re-reading, we tend to automatically interpret many of our own mistakes as correct. We know what we meant to write, so we expect to see the correct form on the page when proofing, and this is often what we end up “reading”. I sometimes type “form” when I mean “from”. Word doesn’t flag it because it isn’t a spelling error. And I often don’t spot it because my brain knows what it is expecting to see and that is what it sees. I’ve resorted to searching for both words and checking each instance manually.

This general phenomenon also explains why certain “spot the mistake” puzzles are so effective. Try this one.

70% per cent of the public can’t spot the mistake in this text.

Apart from it probably being poor English to start a sentence with a number in digit form, did you manage to spot the mistake? I certainly didn’t the first time I read it. The answer is the repetition of % and per cent. Our brains tend to simply filter this out. Even when you are primed to catch mistakes it isn’t always easy to spot them.

We miss repeated little words. We glide straight past a duplicated “the” or an extra “a”. We can stare at a sentence and confidently feel we have inspected it, when our brains have actually filtered out exactly what we were trying to catch. Even when you are primed to detect errors, your mind is still optimised for extracting meaning, not for conducting forensic audits.
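A computer has no such blind spot. As a rough sketch (the pattern and the function name `find_doubled_words` are made up for illustration), a short regular expression can flag immediately repeated words that a human proof-reader tends to glide past. It only catches literal repeats, so it would not spot something like "% per cent", where the repetition is one of meaning rather than spelling.

```python
import re

# A word, some whitespace, then the same word again (ignoring case).
DOUBLED = re.compile(r"\b(\w+)\s+\1\b", re.IGNORECASE)


def find_doubled_words(text: str) -> list[str]:
    """Return each word that appears twice in a row, in lower case."""
    return [m.group(1).lower() for m in DOUBLED.finditer(text)]


print(find_doubled_words("We glide straight past the the mistake."))
# prints: ['the']
```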

The same is true for sound. In this chapter we spend some time looking at the ways we mishear and misinterpret language, not because we are careless, but because we are trying to make sense of noisy signals in real time. Mondegreens and eggcorns are the harmless, funny end of this spectrum: plausible substitutions that fit the context and make a surprising kind of sense. They are reminders that, while context can help us interpret missing parts of language, it can also lead us astray.

Sometimes the consequences are not funny at all, but deadly serious. Under the wrong conditions, an ambiguous phrase or a misheard command can become fatal. When people are anxious, excited, frightened, or simply primed to expect one thing over another, they can fill in the gaps with the wrong word and not even realise they have done so. This is one of the uncomfortable themes of the chapter: misunderstanding can feel, from the inside, exactly like understanding. Sometimes we don’t even know we are making a mistake.

All of this pushes us towards a natural question: if the brain is constantly filling gaps, how does it decide what to fill them with?

One possible explanation for how our brains reason under uncertainty is called the Bayesian Brain Hypothesis. Many neuroscientists think it’s useful to treat the brain as a statistical organ: one that combines prior knowledge with new evidence in order to reach the best current guess about what is happening. This is sometimes called Bayesian integration. The basic logic is simple. Your prior experiences give you a set of expectations about what is likely. Incoming information nudges those expectations. The brain updates.
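To make the updating step concrete, here is a toy Python sketch of Bayes' rule applied to the "form"/"from" slip mentioned earlier. Every number in it is invented purely for illustration, and the function name `posterior` is hypothetical. It is a cartoon of the idea, not a model of what neurons actually do. Suppose the context makes "from" much more likely than "form", but the letters on the page actually spell "form".

```python
def posterior(prior: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Bayes' rule: multiply prior by likelihood, then normalise to sum to 1."""
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


# Invented numbers: the context strongly suggests the writer meant "from".
prior = {"from": 0.9, "form": 0.1}

# Invented numbers: how likely a quick glance at the letters f-o-r-m is
# under each hypothesis. A glance is noisy, so "from" is not ruled out.
likelihood = {"from": 0.2, "form": 0.9}

for word, p in posterior(prior, likelihood).items():
    print(f"{word}: {p:.2f}")
# prints:
# from: 0.67
# form: 0.33
```

With these made-up numbers the strong prior wins: even though the evidence favours "form", the best guess is still "from", which is the proof-reading trap in miniature.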

The Bayesian Brain Hypothesis helps explain why we can read meaning into degraded text, why we can often reconstruct what someone said in a noisy room, and why we can predict the next word in a sentence. It also helps explain why we make systematic errors. Sometimes our prior expectations are too strong. Sometimes they are applied in the wrong domain. Sometimes we mistake familiarity for predictive power and end up with the cognitive equivalent of the gambler’s fallacy: feeling that the world “ought” to balance out in the short term, even when it doesn’t work that way.

The brain (Bayesian or otherwise) is a fantastic tool. It often allows us to draw correct inferences from incomplete information. But it is so fluent at filling in gaps that we are sometimes not even aware that anything is missing. And if you don’t realise something is missing, you don’t realise you might have filled it in incorrectly.

A favour: pre-order the book

If you’ve enjoyed this summary, you’ll find much more detail in the book itself, with the stories, the science and the slightly uncomfortable implications.

If the book sounds like your sort of thing, please consider pre-ordering it. Pre-orders matter far more than most readers realise. They’re one of the strongest early signals that a book has an audience, which influences everything from how many copies are stocked to how widely it’s recommended.

Amazon: https://www.amazon.co.uk/Dont-Know-What-Youre-Missing/dp/1529438039

Bookshop.org (supports independent bookshops): https://uk.bookshop.org/p/books/you-don-t-know-what-you-re-missing-the-science-of-what-s-lost-and-how-to-find-it-kit-yates/497ab9dcf971763a

Thanks,

Kit